Creators and Guests

What is Stupid Sexy Privacy?

Stupid Sexy Privacy is a miniseries about how to protect yourself from fascists and weirdos. Your host is comedian Rosie Tran, and the show is written by information privacy expert B.J. Mendelson. Every episode is sponsored by our friends at DuckDuckGo. Tune in every Thursday night (or Friday morning, if you're nasty) at 12 am EST to catch the next episode.

DuckDuckGo Commercial #3 (Game Show)

Announcer: Welcome back to the DuckDuckGo Privacy Challenge, where contestants get a chance to learn why millions use DuckDuckGo's free browser to search and browse online. Now for our first contestant, Julie. True or false? Google's Chrome protects your personal information from being tracked.

Julie: Hmm, I'm going to say ... true.

Announcer: Incorrect, Julie. If you use Google Search or their Chrome browser, your personal information has probably been exposed. Not just your searches, but things like your email, location, and even financial or medical information.

Julie: Wow, I had no idea.

Announcer: Second question. What browser can you switch to for better privacy protection?

Julie: Is it DuckDuckGo?

Announcer: That's correct. The DuckDuckGo browser keeps your personal information protected. Say goodbye to hackers, scammers, and the data-hungry companies. Download from DuckDuckGo.com or wherever you get your apps.

---

Stupid Sexy Privacy Intro

Rosie: Welcome to another edition of Stupid Sexy Privacy.

Andrew: A podcast miniseries sponsored by our friends at DuckDuckGo.

Rosie: I’m your host, Rosie Tran.

You may have seen me on Rosie Tran Presents, which is now available on Amazon Prime.

Andrew: And I’m your co-producer, Andrew VanVoorhis. With us, as always, is Bonzo the Snow Monkey.

Bonzo: Monkey sound!

Rosie: I’m pretty sure that’s not what a Japanese Macaque sounds like.

Andrew: Oh it’s not. Not even close.

Rosie: Let’s hope there aren’t any zoologists listening.

Bonzo: Monkey Sound!

Rosie: Ok. I’m ALSO pretty sure that’s not what a Snow Monkey sounds like.

*Clears her throat*

Rosie: Over the course of this miniseries, we’re going to offer you short, actionable tips to protect your data, your privacy, and yourself from fascists and weirdos.

These tips were sourced by our fearless leader — he really hates when we call him that — BJ Mendelson.

Episodes 1 through 33 were written a couple of years ago.

But since a lot of that advice is still relevant, we thought it would be worth sharing again for those who missed it.

Andrew: And if you have heard these episodes before, you should know we’ve gone back and updated a bunch of them.

Even adding some brand new interviews and privacy tips along the way.

Rosie: That’s right. So before we get into today’s episode, make sure you visit StupidSexyPrivacy.com and subscribe to our newsletter.

Andrew: This way you can get updates on the show, and be the first to know when new episodes are released in 2026.

Rosie: And if you sign up for the newsletter, you’ll also get a free PDF and MP3 copy of BJ and Amanda King’s new book, “How to Protect Yourself From Fascists & Weirdos.” All you have to do is visit StupidSexyPrivacy.com

Andrew: StupidSexyPrivacy.com

Rosie: That’s what I just said. StupidSexyPrivacy.com

Andrew: I know, but repetition is the key to success. You know what else is?

Rosie: What?

Bonzo: Another, different, monkey sound!

Rosie: I’m really glad this show isn’t on YouTube, because they’d pull it down like, immediately.

Andrew: I know. Google sucks.

Rosie: And on that note, let’s get to today’s privacy tip!

---

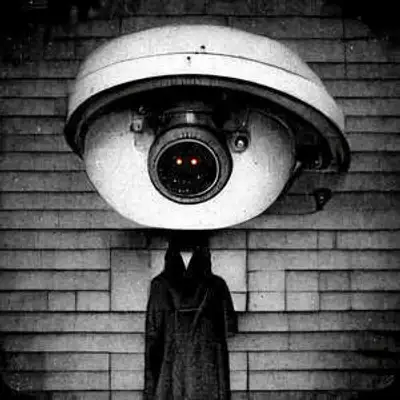

Rosie Tran, Host of Stupid Sexy Privacy: Glassholes. If we're not careful, they could soon be as far as the eye can see. So this week, BJ talks with Brittan Heller, a senior fellow at the Atlantic Council and an affiliate at Stanford University's Cyber Policy Center. BJ and Brittan discuss the privacy and security issues concerning augmented reality, virtual reality, and other parts of the metaverse.

Now don't get us wrong, we're all excited here at the show about what augmented and virtual reality can offer. But as you might have guessed, there are a lot of security and privacy concerns. Particularly about the data collected through head-mounted displays, which are used for AR, VR, and sex, as seen in the film Demolition Man.

That's why we're excited to share this interview with you. Because we don't want any more glassholes. We want to help build a metaverse that's accessible to everyone. Now some of you may not remember the glassholes, so to quickly recap, glassholes were entitled white guys. Ones walking around with a computer on their face and taking pictures and videos of everything around them.

In 2026, that might sound quaint, especially given that we all have smartphones and high-speed mobile internet access now. But there's still some friction involved in taking out your phone and filming someone. To be clear, you shouldn't do that. People are not content. In 2026, we hope you'll join us and not film or photograph strangers as a general practice.

That is, unless they're doing something really funny. And then only if you get their permission to share or post that video. But taking photos and videos with your glasses is a bit more problematic. That's because doing so is far more subtle, leaving people unaware you're recording them. That's how we got the term glasshole in the first place.

That brings us to this week's tip. Don't be a glasshole. I'm joking, but seriously, don't.

Actually, our tip this week is not a tip at all, but a book recommendation. That's right, we want you to read a book. You know, those heavy things that you use to kill spiders?

But we promise this isn't just homework. If you apply the lessons from this book, it could change your life. No, the book we're recommending is not The Secret. It's called Nonviolent Communication, and it was written by Dr. Marshall Rosenberg, a psychologist and founder of the Center for Nonviolent Communication. I actually recommended this book to B.J. a while back. And since the return of the glasshole is inevitable, he and I feel Dr. Rosenberg's book will be helpful. That's because we're all, as a society, going to need to get better at having difficult conversations with strangers. This includes conversations that include lines like, "Hey, jackass. Stop filming me with your glasses." Language Marshall Rosenberg clearly would have approved of.

So go check out Nonviolent Communication. We hope you familiarize yourself with Dr. Rosenberg's advice, especially the parts on how to calmly confront someone who is doing something you don't like. Because if you haven't had that conversation yet in your life, we promise that you will.

So we're all going to need to do our part. Now let's get to this week's interview.

Our Interview With Brittan Heller

[Listener note: This interview was previously recorded in 2023.]

B.J. Mendelson, co-producer of Stupid Sexy Privacy: Hi, Brittan. Thank you so much for joining us on today's show. Would you be so kind as to take a moment to introduce yourself?

Brittan Heller, senior fellow at The Atlantic Council: Hi there, my name is Brittan Heller. My pronouns are she/her. I am currently an affiliate at the Stanford Cyber Policy Center and a senior fellow at the Atlantic Council.

B.J. Mendelson: And I just wanted to, before we dive in, since you have worked as an attorney ... Nothing that we talk about here should be construed as legal advice for people listening at home.

Brittan Heller: Correct.

B.J. Mendelson: So of course, that being said, I have to first ask you about Smell-O-Vision.

Brittan Heller: Smell-O-Vision is remarkable. I hosted a conference on the metaverse and how existing law applies to extended reality about a week ago at Stanford. And we got to go to the Virtual Human Interaction Lab. They have a sprung floor so you can get haptics like the earth shaking with an earthquake. And we got to try Smell-O-Vision. With Smell-O-Vision, you put on the VR headset, you're looking at a campfire. You can hear it crackle. You can see the flames dance. And suddenly you can smell the burning wood and the smoke. You also are able to put a marshmallow on a stick and you can add toasting marshmallow to the mix. Really remarkable.

B.J.: Yeah, I'm a big XR/VR person. And so I had to ask about that because ... there's a book that the Stanford Virtual Reality Lab put out a couple of years ago that's just terrific.

[Editor note: That book is called Experience on Demand.]

But let me ask you, just going right into it, we think Apple might be rolling out their glasses finally this year. And so there's definitely some questions about privacy and safety with XR and things like the glasses. Could you touch a little bit on just some of the issues involved with things like eye tracking and head movement?

[Editor note: XR and Mixed Reality are often used interchangeably. Both are umbrella terms for Virtual Reality (VR) and Augmented Reality (AR). Apple released their Apple Vision Pro not long after this interview was recorded.]

Brittan: Eye tracking and head movement are what differentiate XR hardware from your laptop or your phone. There have been studies at Stanford that show that with 20 minutes of recorded movement, you can be as uniquely identified with XR footage as you would be by your fingerprint. So it kind of takes existing biometrics law and pushes it to the edge.

I've done some studies as a fellow at Harvard Kennedy School, and beyond, where I basically detailed all of the information that you can get from eye tracking. And these are things like whether or not you're telling the truth, whether or not you are sexually attracted to the person that you're looking at, and whether or not you show pre-clinical signs of ailments like ADHD, schizophrenia, Parkinson's or Huntington's, and autism actually in some cases. And these are things you may not know about yourself or your doctor may not know about you yet. And it brings up to me issues of consent. The type of information you need to make XR hardware work, the digital exhaust can give you very, very private information.

And biometrics law is centered around protecting your identity, personal identifying information. I argue that we really need to think about the information that comes out of XR and try to use it to protect our mental privacy. So taking biometrics law and pushing the legal understanding of it to new concepts of digital rights.

BJ: Would this all fall under the category of neurorights? Is that sort of the ... catch-all term for things like this?

Brittan: Yeah, that was developed by a couple researchers just, I think, around the same time. I've heard mental privacy in other human rights contexts, but they're pretty similar.

BJ: And so I got fascinated by this because we had the bioethicist from NYU, Dr. Arthur Caplan, talking about how HIPAA does not protect you at all.

Brittan: Correct.

BJ: Right, with all the information that's being collected. And then now we're adding things that could tell you whether or not you're sexually attracted to someone or something. You've got ADHD. It seems ... how prepared do you think we are for this discussion as a country?

Brittan: I think we're woefully unprepared. As long as I am on panels that start with "What is the metaverse?", I'm less confident that we are ready to talk about the new type of challenges that come out of spatial computing.

BJ: Yeah. And so let me ask you a little bit about governance in the metaverse. And right now, Facebook is trying to own it. Just my personal opinion, I hope they lose on that front. [Editor's note: They did!]

But right now, there are a lot of issues about how do we manage this stuff, how do we manage this information, how do we manage the virtual creations of ourselves. Can you tell us a little bit about just the state of metaverse governance?

Brittan: Sure. The state of metaverse governance is unwell. I'll just put it at that. (laughs.)

I'm on the steering committee for metaverse governance at the World Economic Forum. And there's a couple of things that in my discussions with the leaders of companies and the leaders of governments, it becomes very clear.

One is that when people think about metaverse governance, they are not thinking about it as a whole ecosystem. And by that, I mean, there are different risk factors that come out of VR and AR, for example. Some of the other programs that are coming out of AR, someone showed me one and I thought it was the most adorable real-time location tracking device I'd ever seen. When I told them that's what they'd built, they were horrified.

So AR has more immediate offline implications than VR might.

And second, I think most people think about XR as social VR, and that's just one type of it. The first largest metaverse is AltspaceVR. But the second largest is actually run by Accenture on a Microsoft Mesh platform. And 750,000 people go to work every day in the metaverse. There's a very different kind of governance that you'll have in a workspace environment than you will in a social, chat-based environment. Or than you will using XR to do physical therapy instead of trekking your way to the hospital during a pandemic.

BJ: Yeah. And so I mean, there's all sorts of issues with AR. I think the one that we touched on was bystander consent. And this is something I'm really interested in because it seems like the flagship use for augmented reality glasses is the automatic translation of people speaking different languages. But it doesn't necessarily ... there's no consent that's accepted at any point by the person you're talking to or the people around you. And I'm just curious about your thoughts on that.

Brittan: One of the things that I think is very, very interesting is trying to get civil society and government to understand the technical limitations on bystander consent.

And by that, I mean, pretend you have 100 points. And this is how I describe it to some digital rights nonprofits. Tell me how you would like to change a pair of glasses to do better bystander consent.

And we go through the exercises and they tell me what they want. And if they want a brighter light, I say, that's great. Up to a certain point. If you go above this threshold for brightness, your camera doesn't work. Do you still want to proceed? And they say, oh, no, well, we got to fix that. So it's thinking about the technical trade-offs.

If they want to make better notification, that means that the battery is going to last maybe six minutes, maybe 10 minutes. So you have to think about the limited resources engineering-wise in these devices so that you can create user notification mechanisms in a way that is smart, effective, and sustainable.

BJ: And talking a little bit about disabled rights as it relates to XR, AR, VR, we talked a little bit about how it's mostly white guys that are developing these tools and the hardware and technology. But that's leaving out the majority of people on this planet.

Brittan: It is. I have a piece that I put out in The Information, and it's called "Why XR Is Failing the People Who Could Use It Most." And I talk about how one in seven people on the planet are disabled. And there are apparent and non-apparent disabilities. And disability is unique in human rights law because it's a status that we can move in and out of throughout our lives for most people. If you are not in a wheelchair full time, but you hurt your leg and you're on crutches or temporarily in a wheelchair, you get a taste of what it's like to have challenges with your mobility. I write about how people with disabled status tend to be some of the earliest adopters of this hardware. But they're the last people that it's designed for. And that's actually a real shame for companies because when they think about total addressable market, they're really missing out. So it's not just a human rights issue, it's a smart business issue.

The piece also talks about how religious and ethnic minorities and women also don't benefit from the current hardware design. And by that I mean, I interviewed a man who is a Sikh, and he's a professional VR developer out of the UK. And he talked about how with the new professional edition of the headset that he uses, he can either choose to hear or to see. Because there's not an adjustable strap, he can't get it over his turban. And so he can't work, unless he decides to give up his religious signifiers and cultural headgear.

Women on average have a smaller interpupillary distance, that is, the distance between your pupils, than the average headset is designed for. This is important because interpupillary distance, if you've ever gone to the optometrist, they measure it. Because it's very important for determining your prescription for glasses. The reason that women experience more simulation sickness isn't because women don't play video games. It isn't because women just get more nauseous. It's because of the way that these headsets are designed right now. It's like putting half of the population in the wrong prescription glasses.

---

Book Ad

Hey everyone, this is Amanda King, one of the co-hosts of Stupid Sexy Privacy.

These days, I spend most of my time talking to businesses and clients about search engine optimization.

But that's not what this is about.

I wanted to tell you a little bit about a book I've co-authored with BJ Mendelson called How to Protect Yourself from Fascists and Weirdos. And the title tells you pretty much everything you would want to know about what's in the book.

And thanks to our friends at DuckDuckGo, we'll actually be able to give you this book for free in 2026.

All you need to do is go to the website stupidsexyprivacy.com and sign up to our newsletter.

Again, that website is stupidsexyprivacy.com and then put your name in the box and sign up for our newsletter. We'll let you know when the book and the audiobook are ready.

If you want a PDF copy that's DRM free, it's yours. And if you want an MP3 of the new audiobook, also DRM free, you could get that too.

Now, I gotta get outta here before Bonzo corners me because he doesn't think that SEO is real and I don't have the patience to argue with him. I got a book to finish.

---

Our Interview With Brittan Heller, Part 2

BJ: Right. I mean, what do you think that's attributed to? What do you think causes them to just overlook everybody? Is it ignorance or is it just, you know, they're trying to cater to one specific group over everyone else?

Brittan: I think it's a lack of diversity in the engineering teams because I am a very petite female, and it's very obvious to me when I put on a headset and it doesn't fit my face. So you need people throughout the engineering pipeline who see the world through different vantage points to let you know what is obvious to them that is being overlooked.

Oh, another example of that is there was a researcher from MIT who went to Africa to do her dissertation research about women and economic engagement. And she was using Oculus Go. When she put the headset on her test subjects, the strap snapped 50% of the time. And that is something that really should be clear in the design process. So you think about design justice, how it fits with human rights, and how both of those intersect with digital rights. And that's where I think we need to get to.

BJ: Absolutely. Let me ask you one more question on the metaverse front. When we talk about Roblox, this is something that I've seen my nieces play with and I know it's very popular with kids. But there seems to ... I sound like a tinfoil hat person saying this but ... there seems to be like this crossover of terrorism and extremism in the metaverse, right? Like using things like Roblox to recruit people. Could you elaborate a bit on that?

Brittan: Sure, that's not a new technique. We've seen with online extremism for many years, people using video games as a vector to reach out and try to recruit people. When people are recruited into an ideology like that, they're not being offered an ideology based on terrorism. What they are being offered is a community. So I worked with a lot of people who are called Formers. So former white supremacists, former extremists, and they talk about how people reached out to them because they were looking for a place to belong. So, when you ask them to decamp later on, it's not like asking them to give up views they don't intellectually agree with, it's asking them to leave friends and family behind. And that's why it's sticky. And you can see in a gaming environment where you attract people of all kinds, that standing out as kind of a lone wolf or somebody without a strong community base, you can meet different people that way. So that's the vector of that. That's how it kind of works.

BJ: In terms of metaverse governance, what do you think the solution might be in the near term? I mean, there's so many different ... Just in 15 minutes, we identified all these different things. But in terms of managing it, what do you think the solutions might be in the near term?

Brittan: In the near term? I have an infant daughter, but if I had an older child, I would make going into the metaverse kind of a family activity. And by that, I mean, there's the ability to cast what your child sees onto a screen. I would do that behind them so you could see what they see. And I would also make it so that the headset becomes a tool and not a toy, if that makes any sense.

BJ: Yeah, it absolutely does. I like that approach. Yeah, I really like that. Let me switch gears just a little bit because I'm just watching our time.

You were at a conference discussing an issue involving a Wi-Fi sex toy and how there's no jurisdiction right now to prosecute someone for hacking into it. Could you just tell us a bit about that?

Brittan: And now for something completely different.

BJ: That's right.

(Both laughing.)

Brittan: Yeah. The discussion came from the Existing Law and Extended Reality conference. And Amie Stepanovich, who's the VP of US Policy at the Future of Privacy Forum, was talking about work that she had done where sex toys were found to not have, under existing law ... You wouldn't be able to make a case that somebody had assaulted you if they were to hack into your device and turn it on without your consent. Or you wouldn't be able to say that it was an actual assault because assaults required a physical person to physically touch you. That's the way that we saw cyber harassment laws working at first. And in many states, it's still this way where someone will just add cyber to an existing statute and it creates kind of perverse outcomes.

BJ: So ... if someone were to find themselves in a situation like that, what can they do about it?

Brittan: Oh, a situation where they feel like they're caught in a legal gap?

BJ: Yeah, like you want to ... You want to be able to take action, but there doesn't seem to be a statute or something that you can rely on to do so.

Brittan: That is a situation where I feel bad for the people involved because there may not be a means to hold somebody legally accountable. You may not be able to involve law enforcement, but I would definitely call your elected representative and say, "hey, you're supposed to write laws. Here's a situation where the law doesn't work." That's why I have a job. I advise a lot of companies on legal holes.

I do a lot of academic writing and teaching about the holes that emerge, but it's really impossible to come up with them all.

BJ: It's got to be frustrating too, I imagine, because it just seems like, you know, we talked about this when we did our pre-interview, you know, when you look at the questions that our congressional representatives were asking Mark Zuckerberg, like at the level that they were asking those questions versus the problems that we need to face. There's a significant gap there.

Brittan: Correct. I think letting your representatives know that you're actually interested in making sure laws about the metaverse are in tune with other types of laws, or in training for your local police department, because that's ... I'm a former prosecutor and that's the interface that most people will have. And it sucks when you go to the police and they say, I'm sorry, we're not trained in that. We don't deal with that. Or just turn off your screen.

BJ: Yeah. We've been doing the privacy audit since the show began, and this is something that's come up a lot, with the police just kind of saying to ignore it or to block that person or turn off your computer. There seems to be a lack of training when it comes to things like this. How do we fix that? Is it just better funding and better training for them?

Brittan: Better funding and better training and also interfacing between federal authorities and state authorities. And that sounds very odd, but I was a federal prosecutor and what they called the CHIP, the Computer Hacking and IP specialist, for human rights and special prosecutions. And that meant I became an expert on electronic evidence and prosecuting cybercrime as it related to human rights. And I have to say there were a lot of resources that the FBI had that local departments just weren't aware of.

Also, many, many cyber crimes get misfiled when you go to the local police departments and they'll put cyber harassment and other things like that down as domestic violence. And it's not a clear fit because the statutes often require physical contact between a perpetrator and a victim. And people will go through the stack and just say, 'I don't know what to do with this.' So better training in the areas where people will be most impacted by cybercrime would be helpful.

BJ: Let me ask you a bit about what someone should do, given that the police aren't quite where we want them to be. What should someone do if they find themselves in a situation where they're being stalked or harassed? Like, what are the steps that they can take?

Brittan: One is keep a record. So whenever you get some concerning or strange contact, keep a log of who contacted you, screen names, etc., when it happened and what the content was. Because then you can establish a pattern and practice of this behavior. So it's much harder for police to say, you know, it's just a one-off thing.

Second, I think you can let friends and family know what's going on so that they can be aware and help you. And oftentimes people will get your information through social engineering, meaning they will ask people close to you questions and information that seems innocuous, but that they can use to either locate you or torment you.

And third is going and Googling yourself. Create a Google Alert so you can see what type of information is out there in the public realm. And if you've never done that, it's very illuminating. You also can go to information aggregators. Things like Spokeo that collect information from all over the web. They will scrape information from public records, from social media, from Internet websites. And put it all together so people can buy that for $19.95.

A good way to reduce incidences of harassment or identity theft or things like that is to go and remove yourself from those databases. And it takes a little bit of legwork, but it's very effective.

BJ: Yeah, we've been recommending people use JustDeleteMe. Yes. Just because there's 600 data brokers, you know, like there's so many of them. We did the math, it would take like a solid month. Right? Like if that was your full-time job, to get through them. Which is crazy to me that there's no, there's no just easy opt out unless you live in California or Virginia right now.

Brittan: Well, the good news is there are other comprehensive state privacy laws coming. Colorado is a place to watch. So is Texas. And I believe there's six other bills coming up in the next year.

BJ: Well, that's terrific to hear. You just made my day.

Brittan: That is very rare that somebody says that to me, given my line of work.

BJ: Well, you've made mine, and I think you've made the day of everyone listening to this, because we've talked a little bit about our frustration of just having California. And I think people don't really realize that Virginia also has a similar law.

Brittan: Yes.

BJ: But there's only two of them. So knowing that more is coming, I think, is terrific.

Brittan: And if you look at the biometrics realm, Illinois is the leader in that.

BJ: Oh, really?

Brittan: The Illinois state law is the best for biometric privacy. So other states have been looking at that and copying it as well.

BJ: Well, that's great. I've managed to cram everything into 25 minutes. I was worried I wasn't going to. So let me ask you real quick: what is something that you don't usually get asked in interviews like this that you would love to touch on?

Brittan: Oh, gosh. Why I work with extended reality.

BJ: Yeah, I'd love to know.

Brittan: Many people talk about, like, you had a great career as an international criminal prosecutor. Why are you playing with virtual reality? Isn't it a toy? And I never get to talk about why I love it. I just think it's the closest thing to magic we have. And to me, it's really a tool that augments human potential. And I'm very hopeful that the next phase of spatial computing is going to bring new art and new ways to connect with people all over the world, and better education and new forms of civic participation. And that's why I do what I do, because I call myself a tempered optimist. I'm at heart hopeful.

BJ: I like that. I mean, for me, I always see it as, you know, we have a loneliness epidemic. And to me, I see VR if we can just get it right as a potential cure for that.

Brittan: I have hope.

BJ: Well, this was terrific. I really appreciate you taking the time. And I'm glad that we got everything covered.

Brittan: Me too. Thank you very much. This was a lot of fun.

BJ: Thank you.

---

Live Read #6 Data Brokers

Rosie: When your friends at Stupid Sexy Privacy are out in the world, we often hear two things that we want to address:

The first is, “I don’t want to sound like a Karen, because I’m concerned about my privacy.”

You’re not a Karen if you’re concerned about privacy.

Privacy is a fundamental human right.

So if you care about privacy, you care about other people.

That makes you the complete opposite of a Karen.

The second thing we hear is, “All my stuff is out there already and there’s nothing I can do about it, so why bother?”

And we totally get that feeling.

Most of us are burnt out, working multiple jobs, and taking care of kids or elderly parents. Or both.

Managing our information can feel like just one more thing to add to that list.

The good news is, managing your privacy is not an all or nothing thing.

You can do a little at a time, and still take back control of your privacy, security, and anonymity.

The easiest place to start is by cleaning up the data that’s out there.

This is especially important so that it can’t be used to deny your health insurance claims or make you pay more for rent.

Here’s how that works:

Data brokers collect and sell personal data, which they aggregate from public records, Internet trackers, and other sources.

Many of these sites will display some amount of your personal information, for free.

And if a human can see this data?

So can the bots and scrapers. Many of whom use that data to render adverse financial decisions against you, without you ever knowing.

That, my friends, is why you should always appeal a health insurance denial when you get one. But we’ll talk about that in a future episode.

For now, our friends at DuckDuckGo offer a solution that we want you to know about.

As part of the DuckDuckGo subscription plan, they offer Personal Information Removal services.

The kind that can help find and remove your personal information, such as your name and address, from data broker sites that store and sell it.

This helps to combat identity theft and spam.

Access to Personal Information Removal comes with the DuckDuckGo subscription plan.

And here’s the best part …

There are a lot of data removal services available. The thing is, you often have to give them personal information, including your driver’s license, which they then store on their servers.

DuckDuckGo doesn’t do that.

All of the information you provide them is stored locally on your device. This includes the monitoring and processing of removal requests.

It then scans these sites on a regular schedule to minimize the risk of your information reappearing.

After the initial scan, you can track the progress of ongoing removals, keep tabs on the total number of records that have been removed, and see the site-scanning schedule on your personal dashboard in the DuckDuckGo browser.

While it doesn’t cover all of the data broker websites out there, DuckDuckGo monitors over 50 of those websites, with more being considered.

You can sign up for the subscription via the Settings menu in the DuckDuckGo browser, available on iOS, Android, Mac, and Windows.

Or via the DuckDuckGo subscription website: duckduckgo.com/subscriptions.

This service is currently only available in the United States and on desktop.

---

Stupid Sexy Privacy Outro

Rosie: This episode of Stupid Sexy Privacy was recorded in Hollywood, California.

It was written by BJ Mendelson, produced by Andrew VanVoorhis, and hosted by me, Rosie Tran.

And of course, our program is sponsored by our friends at DuckDuckGo.

If you enjoy the show, I hope you’ll take a moment to leave us a review on Apple Podcasts, or wherever you may be listening.

This won’t take more than two minutes of your time, and leaving us a review will help other people find us.

We have a crazy goal of helping 5% of Americans get 1% better at protecting themselves from Fascists and Weirdos.

Your reviews can help us reach that goal, since leaving one makes our show easier to find.

So, please take a moment to leave us a review, and I’ll see you right back here next Thursday at midnight.

After you watch Rosie Tran Presents on Amazon Prime, right?

-30-