What is Stupid Sexy Privacy?

Stupid Sexy Privacy is a miniseries about how to protect yourself from fascists and weirdos. Your host is comedian Rosie Tran, and the show is written by information privacy expert B.J. Mendelson. Every episode is sponsored by our friends at DuckDuckGo. Tune in every Thursday night (or Friday morning, if you're nasty) at 12 am EST to catch the next episode.

00:00

Here's three reasons why you should switch from Chrome to the free DuckDuckGo browser. One, it's designed for data protection, not data collection. If you use Google Search or Chrome, your personal info is probably exposed. Your searches, email, location, even financial or medical data. The list goes on and on. The free DuckDuckGo browser helps you protect your personal info from hackers, scammers, and data-hungry companies. Two, the built-in search engine is like Google.

00:30

but it never tracks your searches. And it has ad tracker and cookie blocking protection. Search and browse with ease with fewer annoying ads and pop-ups. Three, the DuckDuckGo browser is free. We make money from privacy respecting ads, not by exploiting your data. Download the free DuckDuckGo browser today and see for yourself why it has thousands of five-star reviews. Visit DuckDuckGo.com or wherever you get your apps.

01:00

Welcome to another edition of Stupid Sexy Privacy, a podcast mini series sponsored by our friends at DuckDuckGo. I'm your host, Rosie Tran. You may have seen me on Rosie Tran Presents, which is now available on Amazon Prime. And I'm your co-producer, Andrew VanVooris. With us, as always, is Bonzo the Snow Monkey.

01:22

I'm pretty sure that's not what a Japanese macaque sounds like. Oh, it's not. Not even close. Let's hope there aren't any zoologists listening. That was fairly simple. A lot of people think they're born better than others. I'm trying to prove it's the way you're raised that counts. But even a monkey brought up in the right surroundings can learn the meaning of decency and honesty. OK, I'm also pretty sure that's not what a snow monkey sounds like.

01:49

Over the course of this mini-series, we're going to offer you short, actionable tips to protect your data, your privacy, and yourself from fascists and weirdos. These tips were sourced by our fearless leader. He really hates when we call him that. B.J. Mendelson. Episodes 1 through 33 were written a couple years ago. But since a lot of that advice is still relevant, we thought it would be worth sharing again for those who missed it. And if you have heard these episodes before, you should know we've gone back and updated a bunch of them,

02:17

even adding some brand new interviews and privacy tips along the way. That's right. So before we get into today's episode, make sure you visit StupidSexyPrivacy.com and subscribe to our newsletter. This way you can get updates on the show and be the first to know when new episodes are released in 2026. And if you sign up for the newsletter, you'll also get a free PDF and mp3 copy of BJ and Amanda King's new book, How to Protect Yourself from Fascists and Weirdos.

02:44

All you have to do is visit stupidsexyprivacy.com. Stupidsexyprivacy.com. That's what I just said. Stupidsexyprivacy.com. I know, but repetition is key to success. You know what else is? What? Oh, Bonzo. Eat your pablum like a good boy, and pretty soon you'll grow up to be a big, strong, handsome man just like your daddy. And you'll have Swedish pancake, too. I'm really glad this show isn't on

03:12

to because they pull it down like immediately. You know Google sucks. And on that note, let's get to today's privacy tip.

03:28

Hey friends, it's Rosie Tran, and this week you're getting a brand new privacy tip and interview. This one featuring Hagen Blix, the co-author of Why We Fear AI: On the Interpretation of Nightmares. We thought this interview would be a nice follow-up to last week's interview with Dr. Uri Galle. But before we get to Hagen's interview, we wanted to tie up some loose ends. Season one of Stupid Sexy Privacy will end on April 16th. The all-new second season will debut on April 23rd. The following are some leftover items we wanted to make sure we covered before the season ends. One.

03:57

Something we're hearing a lot from people is how organized they are. And then, of course, we ask them a few questions and find out they're not organized at all. So here's a simple test everyone can use to figure out if they are organized. Do you have, right now, 10 of your neighbors in a Signal group chat? If the answer is no, you're not organized. What you have instead is probably a loosely affiliated group of people, one that will fall apart at the first sign of trouble. If you missed BJ's Burner Phone 101 presentation, where we discuss neighborhood Signal groups, we encourage you to go back and listen to that episode.

04:26

Season one of Stupid Sexy Privacy was all about how to protect yourself from fascists and weirdos. Season two is all about how to fight the fascists and weirdos. So that Burner Phone 101 episode is a preview of what's coming next. Two, this one makes us crazy, but a lot of people still don't know how tariffs work. So we just want to stress that a tariff is a tax that you pay. You are paying it, you, nobody else, not Canada, not Mexico, not China. And according to Axios, you and I are each owed at the time of this recording about $1,700.

04:53

That's how much extra you and I paid since January 20th of 2025 on groceries and everything else because of tariffs. Three, body cameras, like communism, work in theory. In theory, a law enforcement agent wearing a body camera is a great idea, but right now law enforcement frequently turn off their cameras, rendering them useless. So asking ICE agents to wear body cameras when a bunch of them had body cameras on when they murdered Alex Pretti and Renee Good is about as stupid as the tariffs. The only way to rein in ICE is to abolish ICE. That's what everyone should be demanding right now.

05:20

Four, remember that you always have a choice. It's very easy to feel overwhelmed. That's a tactic used by authoritarians all over the world. They want you to feel like there's nothing you can do, but there's always something you can do. For example, this is the number of the U.S. Capitol switchboard, 202-224-3121. You can call that number and ask to be connected to your senators and your House representatives. Tell these people what's on your mind, like how all $75 billion taken from Medicaid and SNAP to fund ICE should be put back into Medicaid and SNAP. That's a thing you can do right now, because we'll tell you a secret.

05:49

Politicians, no matter the political party, are cowards. If enough people call to yell at them, they'll remember who's the boss, and that boss is you, not them. And while it's true one call may not make a difference, if a bunch of people start calling, or if you start calling every week, that makes all the difference in the world. Volume and momentum is what counts, a point we will return to in season two. Five, okay. Last but not least, we recently ran our How to Stay Safe While Going Out on a Date episode. There was one thing we wanted to add that isn't discussed during that episode: Bluetooth trackers.

06:18

Stuff like Apple AirTags, basically. The question being, what do you do if someone plants one of these trackers on you? We're going to give you two options. This is the official Apple version of what to do. For Android users, you can download an app released by Apple called Tracker Detect. But if you have an iPhone, you want to make sure the software on your phone is up to date and then go to Settings, tap Privacy and Security, and select Location Services. Then there are some additional steps you need to take. They are as follows. Turn Location Services on. Scroll down and tap System Services.

06:48

then turn Significant Locations on to be notified when you arrive at a significant location, such as your sex dungeon in Columbus, Ohio. Go to Settings, then tap Bluetooth, turn on Bluetooth, then go back to Settings, tap Notifications, then tap Tracking Notifications, turn on Allow Notifications. Finally, turn off Airplane Mode. If your device is in Airplane Mode, you won't receive tracking notifications. Following these steps will help you spot any device tracking you using Bluetooth. Apple also provides official documentation on how to find the serial number of the AirTag tracking you,

07:18

which you can then report to the police. We included links in today's show notes on how to find the serial number and how to disable the battery inside the AirTag. You want to disable the battery after you have identified the serial number. That's the official way to handle being stalked by an Apple AirTag. Here's the second option, which covers other sorts of Bluetooth tracking devices. If you have an Android device, you can download an app called AirGuard. AirGuard can not only help you spot a device, but also its recent activity, which is super helpful, especially in figuring out where the device was planted on you.

07:48

For Apple users, you can download the app called LightBlue. LightBlue is also excellent in finding Bluetooth devices around you, even if it's just something you lost that connects to Bluetooth, like your AirPods. Now let's get to our interview with Hagen Blix about why we fear AI. Take it away, BJ. All right. Hagen, thank you so much for joining us on Stupid Sexy Privacy. I'm hoping you might be willing to take a moment just to introduce yourself to our listeners. Sure. I'm Hagen Blix. I am a...

08:15

cognitive scientist by training. I'm a linguist. But I got interested in all the AI stuff initially as a linguist who was like, what's going on with these language models? How are language models similar or different from what humans do? That was back in, I don't know, 2018 or something. Then, you know, language models entered the world of real people outside of academic labs. And then I got interested in, okay, what are these

08:43

doing in the political economy in the world, how we should be thinking about these. And I had a lot of friends who were working in these realms, you know, industry was hiring in those realms. And me and my friend, Ingeborg Glimmer, got together and thought about this a lot and ended up writing a book about those kinds of questions about AI, the discourse of AI, and the real impacts and how we really should be thinking about this from an economic and a political perspective.

09:13

And the book is called Why We Fear AI: On the Interpretation of Nightmares, because we kind of take a guide through stories about AI being scary to look at how we really should be thinking about AI. Yeah, I really appreciate it. Because the book itself is not very long. I think it's about 140 pages or so. But the breadth and depth of what you covered, I thought, was fantastic.

09:41

Just because we only have so much time, let me focus a bit on, first, this question, which is, at this point, you know, people of the Stupid Sexy Privacy kind, they don't like generative AI. They think what's being hyped as generative AI is just garbage that produces garbage results. And that this is a bubble that's going to pop like Web3 and crypto. Do they have that about right?

10:07

You know, I think there is definitely a bubble being produced. I sometimes worry when that's people's immediate take that invites a certain kind of comfort in the sense of like, it'll blow over. It's probably going to be a bubble in the same way that the dot com bubble was a bubble, which means it's going to create winners that will continue pushing these technologies onto us. So I like to think of AI as primarily a weapon of class war from above.

10:35

Yes, that is used to depress wages and to de-skill people. And even if some manufacturers of this particular weapon go bankrupt, the class war will continue. The weapon will still be around. And usually when a bubble pops, we should be aware that, you know, the people who bear the social costs of that are usually normal working people and not the billionaires that make these

11:00

sometimes absolutely insane investment decisions. So I think that's a partial truth, but I think that is a truth that doesn't yet point us to a way out of the situation. Right. So would you say that maybe we're too complacent right now about this stuff and maybe we should be speaking up about how this is a weapon of class warfare?

11:25

I don't know if people are complacent, but I think, you know, the way these technologies hit people always comes at different speeds and unevenly. I think what lots of people in lots of sectors should be doing is look at what artists have been doing, right? Artists have been at the forefront of this. Actors, in their strikes and then negotiations over contracts, like SAG-AFTRA,

11:54

the Actors Guild, have talked about what kind of rights are relevant with respect to AI, right? They have thought about, oh, AI produces people's likeness. Can we, in our collective bargaining, block fake actors from being used? Can we block AI from impersonating us? Can we keep our own rights to ourselves? So I think, and you see a lot of artists of all kinds online, whether they

12:21

have the opportunity to be in union structures like actors or not. And they're very aware of what this is. They're very aware that this is a method to undermine the ways that they make their livelihoods, to transform, let's say, the job of a designer from someone who autonomously structures their own work,

12:43

is in control of their own instruments, to someone who's easily replaced, where, you know, an AI generates the first draft and then people hire in a gig economy someone to fix it, right? It's a way of taking agency away from people and reducing the bargaining power that people can derive from their skills. So I think we should be, if we're working in other sectors, very aware of what's going on there and try to learn from each other quickly.

13:13

Do you think there's specific, like, so let me ask, have you found yourself deciding, okay, I'm not going to use this stuff at all? Or are there still specific instances where you're like, okay, maybe this use case is okay? I mean, personally, I rarely find them particularly useful. But I also feel like...

13:39

I'm personally not particularly interested in being the kind of critic that just tells people not to use things. You know, if other people find uses in these, then that's something where we should be thinking through the politics and the economics and the effects of it. But I think, you know, waving your finger and being like, it's bad to use this, is just generally not good politics. And if you want to have one use case that I personally find, it's that they're excellent as a thesaurus replacement, because I'm like, oh, I have this word in my head and I can't think of what it is, and

14:08

I'm not a native English speaker either. And you're like, generate 20 words that mean roughly this meaning. And it's a thing that language models are good at precisely because verification is very easy for me, right? I already know that there's a word that I'm looking for. I just can't get to it. I have, like, retrieval issues. And so I look at the 20 words and I immediately know whether one of these is the one that I want, right? So I think a lot of AI tasks, if we look at it purely from the task thing, that they're often good at the

14:37

If the asymmetry is where production is hard and verification is easy, but you know, is that a good reason to cluster, to build data centers everywhere and increase our energy consumption in a time when climate change is already a problem? No, I don't think so. Absolutely right. Like, this is a climate emergency. There is absolutely this cost-benefit analysis with it that I don't think enough of us are doing. And we're going to get to that. That's a

15:06

big thing I wanted to talk to you about. Before we get there though, I wanted to see if I got this right because I came away from the book with the impression that it was the managerial class that has more to fear from what we refer to as AI than the rest of us. Is that right? I think the managerial class and other people in professional jobs, right? In a sense, like the first way I think that's useful to think about it is just to say, oh,

15:36

It brings the logic of industrial production to the realm of language, right? A language model is an instrument for industrializing the production of language and images. And that can affect many kinds of jobs, but management is certainly one of those, right? If we're thinking about it, in a sense, an even broader way of thinking about it is that it, like, produces a new scale of information processing, right? So

16:06

I do think if we think about, we talk about an example of Amazon and how they use a video classification AI in the warehouses, right? Where Amazon is using a classification software that learns from hundreds of thousands of hours of video of people stowing in their warehouses, what stowing looks like. And then they start having other workers label these videos. And then the AI learns to...

16:34

label videos of people with, this person is making a mistake, or this person is a very quick stower, et cetera. Right. And there's an automation of an oversight function, of a managerial function. Right. So I do think that certainly lower-level management oversight functions are getting embedded into these technologies and are making certain classes of managers superfluous. And another level, I think, at which we can think about this

17:04

is to say, yeah, management itself is historically always trying to centralize knowledge about how labor processes work. Sometimes that's implicit, sometimes that's explicit, as in all the managerial schools that follow Taylorism or scientific management, where the principles are literally saying management has to centralize all knowledge of labor processes among the managers.

17:34

And of course, we can think about an AI and an LLM as an attempt to centralize knowledge of certain labor processes, not within a management structure, but in a kind of machine, in an algorithm in a data center, right, in something that is capital itself, right, something that is the machinery, right? So yes, I think there are many ways in which AI really represents an attempt,

18:03

you could say in the abstract, of the forces of capital to put the power not just into the representatives of capital like managers, but to put it directly into a thing that can be owned, like a giant collection of matrices on a server that runs on these data center farms. Yes. Right. Let me ask you, because I feel like there's been, within the past five years, we've now seen, between COVID-19, where people didn't need

18:33

to work from the office and now with what we're calling AI, which is really the LLMs and chat models, it very much seems like we don't need managers. That's sort of the impression I came away with. I was just curious to hear your thoughts on that. That's a nice question. I think the question, in a sense, raises the extra question of who doesn't need managers, right? Because managers...

18:57

You know, you can say there's an objectively needed function of managers. Sometimes managers coordinate different workers and maybe that's generally needed. And then there's the function of managers, which is to be producing oversight for, you know, the company owners. They're the representatives of the owners of let's say stock, right? And there's a conflict there. In a sense, there's often a way in which the workers don't actually need a manager to do their work, but the

19:26

manager is needed to make sure that the workers are replaceable, that knowledge doesn't get centralized among positions that would give people power to influence their workplaces. Managers are there to make sure that people work as hard as possible and don't start to balance other things that might be important in their lives, right? So there's a sense in which a lot of management is actually about

19:53

managing conflict that is inherent to a system in which some people sell their ability to work and others buy other people's ability to work in order to make a profit like companies do, right? And that part, I think, if we're thinking about automating the second part, that it may be true that quite a few of these managerial functions are

20:18

getting automated by these algorithms, but that doesn't make me feel good, right? That makes me feel worried in a sense. Hey, everyone. This is Amanda King, one of the co-hosts of Stupid Sexy Privacy. These days, I spend most of my time talking to businesses and clients about search engine optimization. But that's not what this is about. I wanted to tell you a little bit about a book I've co-authored with B.J. Mendelson called How to Protect Yourself from Fascists and Weirdos,

20:47

and the title tells you pretty much everything you would want to know about what's in the book. And thanks to our friends at DuckDuckGo, we'll actually be able to give you this book for free in 2026. All you need to do is go to the website, StupidSexyPrivacy.com, and sign up to our newsletter. Again, that website is StupidSexyPrivacy.com, and then put your name in the box and sign up for a newsletter. We'll let you know when the

21:16

book and the audiobook are ready, because if you want a PDF copy that's DRM free, it's yours. And if you want an MP3 of the new audiobook, also DRM free, you can get that too. Now, I've got to get out of here before Bonzo corners me because he doesn't think that SEO is real and I don't have the patience to argue with him because I got a book to finish. Let me talk about that worry for a second.

21:46

I mean, so the book is called Why We Fear AI. And I know that you spent 140 pages explaining this, but I'm wondering how much of the fear is driven by the uneven distribution of the LLMs and chatbots or just technology in general. I was hoping you might be able to elaborate on that fear a bit and where it comes from for our listeners.

22:09

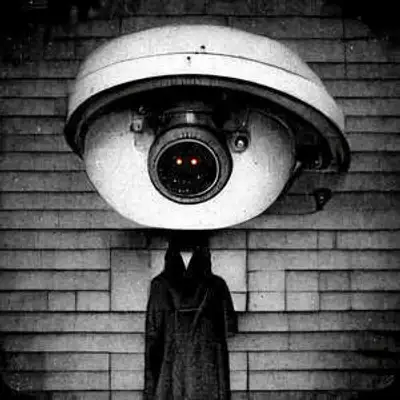

Yeah, I think in many ways, something that we see with these kinds of things is when we see them being used in immediately hostile forms, right? In a sense, the algorithm that constantly watches the Amazon worker is hostile, or maybe even more obvious, if you have algorithms like the ones that have been used in the war on Gaza

22:31

that just determine whether somebody is a bombing target, where the military says, we want to bomb X many targets today, please give us a list, and the algorithm that has been going through everybody's phones, records, et cetera, just puts a number on it, says this person has a 73% chance of being affiliated with Hamas. And you'd make a cutoff and say you start bombing those people, right? These kinds of ways in which these algorithms can immediately serve

22:59

a power that is imposed upon people either with deadly effects or with effects of labor control. And we often see that this happens in what Virginia Eubanks, in her amazing book, Automating Inequality, called low rights environments, right? The workers in Amazon warehouses are often the most replaceable and thus they have few rights in comparison to, let's say, either workers

23:28

that have a special skill set that produces bargaining power that they can use to have control over their jobs, who are not in this low rights environment. And of course, there's hardly anything that is more of a low rights environment than being a civilian in a war zone. But we very often see that these kinds of technologies over time shift into other sectors.

23:57

I would say when it comes to military technology, it's very common to see military technology go through borders, which are kind of these liminal zones between the foreign and the domestic and into the domestic. And of course, we're seeing this right now. There's technologies that are historically maybe used in borders.

24:24

like facial recognition. We know that some of the companies that produce the drones that fly over Gaza also produce the surveillance towers that are at the southern US border that try to find people. And now we're seeing that ICE is using these same kinds of technologies domestically. Right. So I think there's very rational worries that

24:48

people that are perhaps thinking of themselves as more middle-class may have about these technologies being used against them. And I also think it's important to say that as always, when we tell these kinds of maybe perhaps a bit more science fiction stories that these anxieties often... Let me put this another way.

25:11

The way we talk about these anxieties, it matters whether we talk about these anxieties in terms just of like, I'm worried that this will happen to me. And I think very often when people talk about AI futures, where, you know, the AI becomes all powerful and will control us all, you know, in the Matrix-style narratives that you often find when people talk about AI increasing in power, that is a way that does not produce solidarity with the people to whom that kind of surveillance

25:40

and that kind of violence is already done today, as opposed to talking about how it spreads out from low rights environments to more middle class people, what kind of channels these kinds of technological distributions go through, what that has to do with the relation between the military and the police and police militarization, right? If we think about these concrete existing people and the channels through which

26:09

techniques of exerting power flow, then we can produce solidarity, right? So that's one of the motivations of writing the book about these more fictionalized and less fictionalized narratives, to ask, what about the real effects gets hidden away under particular kinds of narratives? And it's amplified. Yeah, I mean, you mentioned the 70% bombing accuracy, which

26:39

reminds me of the firebombing of Dresden in World War II, where we're sort of indiscriminately dropping bombs and killing over 100,000 people that maybe are Nazis. We're not 100% sure. But yeah, a lot of the technology that ICE is using, you are absolutely right to point out, was first perfected out in Gaza and also in China in a lot of respects, tracking since.

27:08

Towards the end of the book, you talk about one of the ways to overcome that. And I just want to make sure that I kind of had this right. And you present this equation of power, which is that the tools that we're presented with as AI, as I think you touched on a little bit, but I just want to make sure people get it, the tools that we're presented with as AI were presented

27:32

in an adversarial way. They're presented to us as tools of the oligarchs as opposed to tools of the working class. So what should people know about that power dynamic at play when it comes to these new technologies or products that get hyped up? I think perhaps the most important thing that doesn't happen enough in the public discourse is to kind of go a bit into what

28:01

is meant when newspapers write about things like productivity. On the one hand, there's the thing that capitalism always does, which is it really does increase productivity, by which we just mean, you know, people produce more of the same stuff in less amounts of time. We probably produce fibers at 100,000 times the speed or something than we did 300 years ago. And in many ways that does

28:29

produce the possibility for liberation, for more free time, for more equitable distribution of resources, but then very often it doesn't, right? We also know that garment work for the last 200 years has moved around the planet, but wherever the particular garment work, the sewing, etc., is done, it is done under miserable conditions, right? That may have been in England in the 1840s.

28:58

It may have been in New York in the 1890s and we know that today it is in Bangladesh, right? So in a sense, the increase of productivity in that particular sense often doesn't reach the workers or reaches only particular workers. And that's one way in which we can think about productivity as a kind of an ambiguous thing of capitalism. But there's another thing that we also call

29:24

productivity in the media discourse that I think we should view differently. And that is when, rather than increasing the amount of production that you can do in a particular time of whatever it is that you are producing, when you don't increase that, but you decrease the amount of wages that somebody can command for the particular skills that are needed.

29:50

Right? So we can call this de-skilling, not in the sense of people need fewer skills, but in the sense of like the skills that they have command less bargaining power in the marketplace. People are more easily replaced. And we've seen that in the history of capitalism a lot too. And I think AI really is primarily aimed at those kinds of things. It is aimed at

30:16

reducing the bargaining power that people can derive from their skills in order to make people more replaceable, in order to be able to depress wages, etc. Right? In order to gigify the jobs like the hypothetical logo designer that I was talking about in the beginning. And I think unlike the productivity increases, which kind of have this ambiguous sense to it of in a sense, they might be good. And in practice, often

30:45

the fruits of that productivity increase don't go to everyone, but only to particular people. And I think in contrast to that, the de-skilling move is really only about how do the fruits of the total production that we do as a society, how do they get distributed between the people who do the work on the one hand and the people who own the capital that the work is done with on the other hand, right? So that is purely

31:15

antagonistic class politics, right? I don't think there's any reason to not just be so clear and open about that. And if AI is primarily about the second, and not about the first, if it's primarily about de-skilling, and not productivity, then we really need to think about this in these antagonistic political terms and think about, well, how do we, if we aren't making our living by owning capital, and like,

31:43

if your primary income doesn't come from owning stock in Meta or Google or whatever, how do we deal with that politically? How do we form a coherent social subject? How do we get together so that we can organize our activities and coordinate them to make a politics that, yeah, that can address that,

32:10

right? Then that may be unions, may be political activities. And I think in all these contexts, then it's very important that we focus on the structure of knowledge in workplaces, the locus of where is knowledge generated? Where does it sit? Who has control of it? Where does it get shifted around? And what the particular technologies have to do with how much control over workplaces

32:37

people have. And that's on the one hand important just because that is what makes the things into the bread and butter issue, literally in the sense of like, the easier you are to be replaced, the lower your wages are because you don't have any bargaining power, but also in the sense of, can you think of your job as something that is meaningful, where you have control over things, where you can derive dignity and pride from the skills that you have.

33:05

And I think AI in many ways is an attack on those kinds of things that resembles attacks that have happened in maybe more blue-collar work many times over history. Agreed. Yeah. That's something I fully agree with. In the time we have left, I did want to get to climate change because you said something that I've been banging the drum on for a long time. I was just really happy to see it,

33:29

which is where you point out that climate change is a solvable problem, but we don't bother to solve it because it's not in the best interest of the oligarchs. Before we close out, I just wanted to hear if you could elaborate a bit on that point for our audience. Yeah, I mean, you can think about capitalism as a kind of system that allocates power, right? Some people get to make investment decisions and others don't. And how does that power get allocated? Well, it's by whoever made

33:58

profitable investment decisions in the past. So then you have more capital that you can reinvest, et cetera. And unfortunately, there are these things called externalities. Sometimes your investment decisions produce costs, but you don't bear the costs. And one of the clear cases is CO2. The companies that make decisions about how our energy is produced, about how much

34:28

coal and oil we dig out of the earth's crust, they don't bear the costs that CO2 and climate change produce. Those are borne by everyone else, basically. So markets really can't factor in these costs. And so the people who make those decisions, precisely because capitalism is this decision-power allocation mechanism, won't make those kinds of decisions. They won't

34:57

disinvest from fossil fuel infrastructure because they already have these giant investments. They don't want to devalue their old investments. And even if they did, and I think that's a crucial part, even if they did want to, all that would happen in the world is that their own actual decision power would be crushed, because markets ultimately decide who this decision power goes to. If the head of a fossil fuel company

35:25

goes and says, actually, fossil fuel is bad, I'm going to close down my company, then he stops making a profit. Then he can't make investment decisions anymore. He goes bankrupt, and the oil fields and the refineries go up for auction in bankruptcy. Right? That is how it would go. And so there is, in a sense, nobody under capitalism who's simply in a position to make these kinds of decisions.

35:55

I think that's well said. And obviously, it's a situation that we all would like some solidarity through unions and banding together as a working class to fix. Where can we find your work? Where can we buy the book? I think ideally, you ask your local bookstore to order the book for you. I mean, A, local bookstores are great. They're very important for communities.

36:23

They usually treat their workers a little less badly than Amazon, which is not a very high bar. And, you know, if you're interested in the work: if somebody asks for a book in a bookstore, the bookstore is much more likely to put the book onto its shelves, which may make it more likely that somebody else will find the book. So, you know, it's good. It's nice in all the ways to buy from your local bookstore. But of course, you can also just ask your local library, right? Libraries

36:53

as far as my experience goes are extremely responsive to people asking. So if your local library doesn't have it, go and ask them and you can just borrow it from the library, which is also a great way of getting a book. Agreed. Thank you so much.

37:10

Today, I'd like to highlight a couple of features offered in DuckDuckGo's browser. Both are really important to know about as it relates to artificial intelligence. Now, as you know, DuckDuckGo's search engine does not track what you search for. It also offers helpful AI summaries, similar to what Google has, but here's the key difference. DuckDuckGo's AI summaries are more concise than what Google offers and are more private. I can't stress the last point enough, because a lot of information we enter online is anything but.

37:38

Now let's talk about AI chat models for a second, like ChatGPT. Although we prefer you not use AI chat models, if you choose to do so, Duck.ai allows you to privately access them within the DuckDuckGo browser. DuckDuckGo anonymizes chats, so AI companies don't know who the queries are coming from. Your data is never used to train these chat models. And your conversations with these chat models are completely private. Duck.ai costs you nothing to use, and there's no account required to do so.

38:08

And if you're like us at Stupid Sexy Privacy and you're anti-AI, you can turn off both Duck.ai and the AI search summaries right within the browser. No harm, no foul. Oh, thanks for reminding me, Bonzo. I meant to include this sentence. Do you think AI slop is ruining the internet? We do too. That's why DuckDuckGo's search engine also lets you filter AI images out of your search results.

38:35

I know, those images you saw of Clint Eastwood were very upsetting.

38:42

What? I didn't make those.

38:46

No I didn't! Andrew, can you please come get Bonzo? He's accusing me of creating synthetic media again, and that's really offensive.

39:01

Where was I? So do you want to explore these AI tools without having them creep on you? Well, there's a browser designed for data protection, not data collection, and that's DuckDuckGo. Make sure you visit DuckDuckGo.com and check out today's show notes for a link to download the DuckDuckGo browser for your laptop and mobile device. This episode of Stupid Sexy Privacy was recorded in Hollywood, California.

39:29

It was written by B.J. Mendelson, produced by Andrew Van Vorse, and hosted by me, Rosie Tran. And of course, our program is sponsored by our friends at DuckDuckGo. If you enjoy the show, I hope you'll take a moment to leave us a review on Pocket Casts, Apple Podcasts, or wherever you may be listening. This won't take more than two minutes of your time, and leaving us a review will help other people find it. We have a crazy goal of helping 5% of Americans get 1% better at protecting themselves from fascists and weirdos.

39:57

Your reviews can help us reach that goal, since leaving one makes our show easier to find. So please take a moment to leave us a review and I'll see you right back here next Thursday at midnight. After you watch Rosie Tran Presents on Amazon Prime, right? Bonzo, I wish that you'll have many more birthdays just like this one. With those you love and trust around you always to share your happiness. And I wish that you'll get a chance very soon to prove that being loved and looked after like a human being

40:27

has made you feel like a human being and that if love can do that to you then it ought to be able to make some other human beings human beings.